들어가며

재해, 혹은 순간적인 트래픽 증가로 노드가 다운되었다고 가정해볼까요.

MongoDB Replica set은 automatic failover를 통해 HA를 보장합니다.

그러나 Replica set의 형상 유지와, 기능의 정상 동작에는 majority 과반수 이상의 멤버의 healthy 가 요구되는데요.

이 문서를 통해 MongoDB Replica set의 멤버가 연속해서 다운되어 majority 를 만족하지 못한다면 레플리카 셋은 어떤 모습으로 남아있을지 확인합니다.

Replica set 구성

시뮬레이션을 위해 레플리카 셋을 먼저 구성합니다.

테스트 용도이므로 하나의 장비에서 포트를 달리한 3개의 mongod 프로세스로 구성합니다.

mongod 프로세스 구성

node 1: 27017

sudo mongod --replSet judeMdbRs --dbpath ~/data/rs1 --logpath ~/log/rs1/mongod.log --fork --port 27017 --oplogSize 200node2: 27018

sudo mongod --replSet judeMdbRs --dbpath ~/data/rs2 --logpath ~/log/rs2/mongod.log --fork --port 27018 --oplogSize 200node 3: 27019

sudo mongod --replSet judeMdbRs --dbpath ~/data/rs3 --logpath ~/log/rs3/mongod.log --fork --port 27019 --oplogSize 200

Replica set 구성

test> rsconf = {

... _id: "judeMdbRs",

... members: [

... {_id: 0, host: "localhost:27017"},

... {_id: 1, host: "localhost:27018"},

... {_id: 2, host: "localhost:27019"}

... ]}

{

_id: 'judeMdbRs',

members: [

{ _id: 0, host: 'localhost:27017' },

{ _id: 1, host: 'localhost:27018' },

{ _id: 2, host: 'localhost:27019' }

]

}

test> rs.initiate(rsconf)

{ ok: 1 }rs.initiate() 보조자에 레플리카 셋 구성 도큐먼트를 전달합니다.

shutdown 이전 레플리카 셋 상황

shutdown 작업 이전 시점에서 셋의 상황입니다.

judeMdbRs [direct: other] test> db.isMaster()

{

topologyVersion: {

processId: ObjectId("63e5e6ba24931a4e568de627"),

counter: Long("3")

},

hosts: [ 'localhost:27017', 'localhost:27018', 'localhost:27019' ],

setName: 'judeMdbRs',

setVersion: 1,

ismaster: true,

secondary: ,

me: 'localhost:27017',

lastWrite: {

...

}db.isMaster() 커맨드 결과입니다. 잠시 뒤 27017은 셋의 Primary로 올라서게 됩니다.

judeMdbRs [direct: secondary] test> rs.status()

{

set: 'judeMdbRs',

date: ISODate("2023-02-10T06:45:55.634Z"),

myState: 1,

term: Long("1"),

syncSourceHost: '',

syncSourceId: -1,

heartbeatIntervalMillis: Long("2000"),

majorityVoteCount: 2,

writeMajorityCount: 2,

~~~~ votingMembersCount: 3,

writableVotingMembersCount: 3,

optimes: {

lastCommittedOpTime: { ts: Timestamp({ t: 1676011552, i: 1 }), t: Long("1") },

lastCommittedWallTime: ISODate("2023-02-10T06:45:52.151Z"),

readConcernMajorityOpTime: { ts: Timestamp({ t: 1676011552, i: 1 }), t: Long("1") },

appliedOpTime: { ts: Timestamp({ t: 1676011552, i: 1 }), t: Long("1") },

durableOpTime: { ts: Timestamp({ t: 1676011552, i: 1 }), t: Long("1") },

lastAppliedWallTime: ISODate("2023-02-10T06:45:52.151Z"),

lastDurableWallTime: ISODate("2023-02-10T06:45:52.151Z")

},

lastStableRecoveryTimestamp: Timestamp({ t: 1676011511, i: 1 }),

electionCandidateMetrics: {

lastElectionReason: 'electionTimeout',

lastElectionDate: ISODate("2023-02-10T06:45:22.061Z"),

electionTerm: Long("1"),

lastCommittedOpTimeAtElection: { ts: Timestamp({ t: 1676011511, i: 1 }), t: Long("-1") },

lastSeenOpTimeAtElection: { ts: Timestamp({ t: 1676011511, i: 1 }), t: Long("-1") },

numVotesNeeded: 2,

priorityAtElection: 1,

electionTimeoutMillis: Long("10000"),

numCatchUpOps: Long("0"),

newTermStartDate: ISODate("2023-02-10T06:45:22.127Z"),

wMajorityWriteAvailabilityDate: ISODate("2023-02-10T06:45:23.380Z")

},

members: [

...

}rs.status() 명령어를 통해 레플리카 셋의 상태 정보를 확인합니다.

여기서 주목할 key는 다음과 같습니다.

majorityVoteCount: 2,

writeMajorityCount: 2,

votingMembersCount: 3,

writableVotingMembersCount: 3,

electionCandidateMetrics: {

lastElectionReason: 'electionTimeout',

lastElectionDate: ISODate("2023-02-10T06:45:22.061Z"),

electionTerm: Long("1"),

lastCommittedOpTimeAtElection: { ts: Timestamp({ t: 1676011511, i: 1 }), t: Long("-1") },

lastSeenOpTimeAtElection: { ts: Timestamp({ t: 1676011511, i: 1 }), t: Long("-1") },

numVotesNeeded: 2,

priorityAtElection: 1,

electionTimeoutMillis: Long("10000"),

numCatchUpOps: Long("0"),

newTermStartDate: ISODate("2023-02-10T06:45:22.127Z"),

wMajorityWriteAvailabilityDate: ISODate("2023-02-10T06:45:23.380Z")

},레플리카 셋에서 election과 합의에 관련된 주요 키 파라미터입니다.

3개의 멤버로 이루어진 레플리카 셋은 majority 과반수가 2 입니다.

majority가 확보되지 않는다면, 레플리카 셋은 election과 그를 통한 합의를 정상적으로 하지 못하게 되고,

그에 따라 주요 기능(automatic failover, read/write) 를 하지 못할 것이라고 가정하고 멤버 down 시나리오를 진행하겠습니다.

Replica set member down

멤버를 하나씩 다운시킵니다.

db.adminCommand({"shutdown": 1})

1차 Primary 다운

judeMdbRs [direct: primary] test> db.adminCommand({"shutdown": 1})

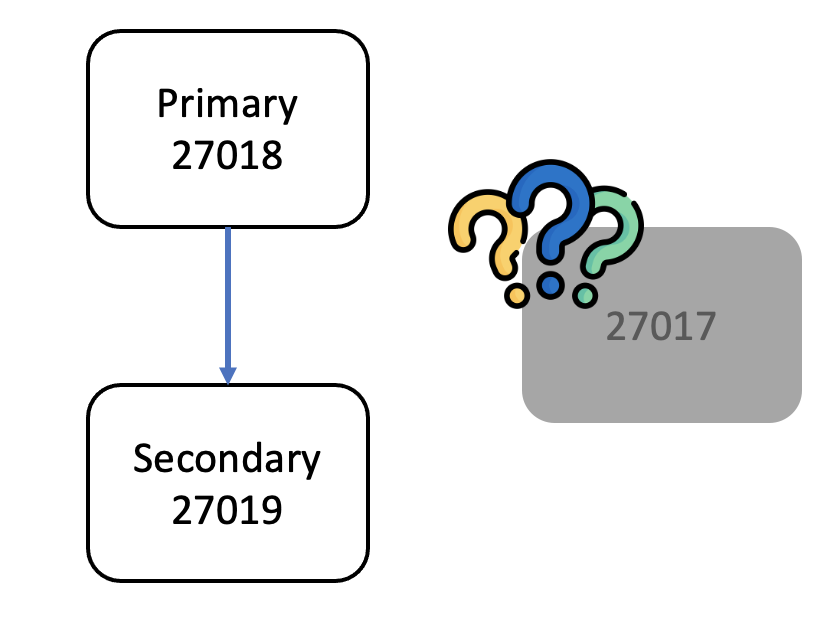

MongoNetworkError: connection 1 to 127.0.0.1:27017 closed현재 셋의 프라이머리 27017 을 다운시킵니다.

이후 임의의 멤버(27018)에 접근하여 db.isMaste()를 통해 현재 셋의 프라이머리를 확인합니다.

judeMdbRs [direct: primary] test> db.isMaster()

{

topologyVersion: {

processId: ObjectId("63e5e6bfdc075ad75b238b1b"),

counter: Long("7")

},

hosts: [ 'localhost:27017', 'localhost:27018', 'localhost:27019' ],

setName: 'judeMdbRs',

setVersion: 1,

ismaster: true,

secondary: false,

primary: 'localhost:27018',

me: 'localhost:27018',

...

}27018이 새로운 프라이머리로 선출되었습니다.

셋은 어떤 모습으로 남아 있을까요.

judeMdbRs [direct: primary] test> rs.status()

{

set: 'judeMdbRs',

date: ISODate("2023-02-10T07:24:39.509Z"),

myState: 1,

term: Long("2"),

syncSourceHost: '',

syncSourceId: -1,

heartbeatIntervalMillis: Long("2000"),

majorityVoteCount: 2,

writeMajorityCount: 2,

votingMembersCount: 3,

writableVotingMembersCount: 3,

optimes: {

lastCommittedOpTime: { ts: Timestamp({ t: 1676013878, i: 1 }), t: Long("2") },

lastCommittedWallTime: ISODate("2023-02-10T07:24:38.716Z"),

readConcernMajorityOpTime: { ts: Timestamp({ t: 1676013878, i: 1 }), t: Long("2") },

appliedOpTime: { ts: Timestamp({ t: 1676013878, i: 1 }), t: Long("2") },

durableOpTime: { ts: Timestamp({ t: 1676013878, i: 1 }), t: Long("2") },

lastAppliedWallTime: ISODate("2023-02-10T07:24:38.716Z"),

lastDurableWallTime: ISODate("2023-02-10T07:24:38.716Z")

},

lastStableRecoveryTimestamp: Timestamp({ t: 1676013858, i: 1 }),

electionCandidateMetrics: {

lastElectionReason: 'stepUpRequestSkipDryRun',

lastElectionDate: ISODate("2023-02-10T07:23:58.696Z"),

electionTerm: Long("2"),

lastCommittedOpTimeAtElection: { ts: Timestamp({ t: 1676013832, i: 1 }), t: Long("1") },

lastSeenOpTimeAtElection: { ts: Timestamp({ t: 1676013832, i: 1 }), t: Long("1") },

numVotesNeeded: 2,

priorityAtElection: 1,

electionTimeoutMillis: Long("10000"),

priorPrimaryMemberId: 0,

numCatchUpOps: Long("0"),

newTermStartDate: ISODate("2023-02-10T07:23:58.713Z"),

wMajorityWriteAvailabilityDate: ISODate("2023-02-10T07:23:59.707Z")

},

electionParticipantMetrics: {

votedForCandidate: true,

electionTerm: Long("1"),

lastVoteDate: ISODate("2023-02-10T06:45:22.064Z"),

electionCandidateMemberId: 0,

voteReason: '',

lastAppliedOpTimeAtElection: { ts: Timestamp({ t: 1676011511, i: 1 }), t: Long("-1") },

maxAppliedOpTimeInSet: { ts: Timestamp({ t: 1676011511, i: 1 }), t: Long("-1") },

priorityAtElection: 1

},

members: [

{

_id: 0,

name: 'localhost:27017',

health: 0,

state: 8,

stateStr: '(not reachable/healthy)',

uptime: 0,

...

},

{

_id: 1,

name: 'localhost:27018',

health: 1,

state: 1,

stateStr: 'PRIMARY',

...

},

{

_id: 2,

name: 'localhost:27019',

health: 1,

state: 2,

stateStr: 'SECONDARY',

...

}

],

ok: 1,

'$clusterTime': {

clusterTime: Timestamp({ t: 1676013878, i: 1 }),

signature: {

hash: Binary(Buffer.from("0000000000000000000000000000000000000000", "hex"), 0),

keyId: Long("0")

}

},

operationTime: Timestamp({ t: 1676013878, i: 1 })

}27017은 여전히 셋의 멤버로서 남아있습니다. 그러나 '(not reachable/healthy)' 로 통신하지 못하는 상태입니다.

물론 타임아웃 이후이지만, 레플리카 셋과 다른 멤버 입장에서는 일시적인 네트워크 파티션인지 노드 자체에 문제(결함)가 생겼는지 알 수 없습니다. 다시 돌아올지, 아닐지 알 수 없습니다.

여전히 셋의 멤버로 남아있기에 majority 과반수 또한 변하지 않았습니다. 여전히 2 입니다.

이 상황에서 프라이머리가 다시 한번 다운된다면 어떻게 될까요.

2차 Primary 다운

judeMdbRs [direct: primary] test> db.adminCommand({"shutdown": 1})

MongoNetworkError: connection 2 to 127.0.0.1:27018 closed다시 한 번 Primary를 다운시킵니다.

judeMdbRs [direct: secondary] test> db.isMaster()

{

topologyVersion: {

processId: ObjectId("63e5e6c3390bb6da198b6d13"),

counter: Long("9")

},

hosts: [ 'localhost:27017', 'localhost:27018', 'localhost:27019' ],

setName: 'judeMdbRs',

setVersion: 1,

ismaster: false,

secondary: true,

me: 'localhost:27019',

...

}남아 있는 멤버 27019에 접근하여 Primary 정보를 확인합니다.

27019는 자신을 Secondary로 인식하고 있습니다.

그렇다면 레플리카 셋의 상태를 확인해보겠습니다.

judeMdbRs [direct: secondary] test> rs.status()

{

set: 'judeMdbRs',

date: ISODate("2023-02-10T07:28:53.141Z"),

myState: 2,

term: Long("3"),

syncSourceHost: '',

syncSourceId: -1,

heartbeatIntervalMillis: Long("2000"),

majorityVoteCount: 2,

writeMajorityCount: 2,

votingMembersCount: 3,

writableVotingMembersCount: 3,

...

electionParticipantMetrics: {

votedForCandidate: true,

electionTerm: Long("2"),

lastVoteDate: ISODate("2023-02-10T07:23:58.701Z"),

electionCandidateMemberId: 1,

voteReason: '',

lastAppliedOpTimeAtElection: { ts: Timestamp({ t: 1676013832, i: 1 }), t: Long("1") },

maxAppliedOpTimeInSet: { ts: Timestamp({ t: 1676013832, i: 1 }), t: Long("1") },

priorityAtElection: 1

},

members: [

{

_id: 0,

name: 'localhost:27017',

health: 0,

state: 8,

stateStr: '(not reachable/healthy)',

uptime: 0,

...

},

{

_id: 1,

name: 'localhost:27018',

health: 0,

state: 8,

stateStr: '(not reachable/healthy)',

uptime: 0,

...

},

{

_id: 2,

name: 'localhost:27019',

health: 1,

state: 2,

stateStr: 'SECONDARY',

uptime: 2930,

...

}

],

ok: 1,

'$clusterTime': {

clusterTime: Timestamp({ t: 1676014032, i: 1 }),

signature: {

hash: Binary(Buffer.from("0000000000000000000000000000000000000000", "hex"), 0),

keyId: Long("0")

}

},

operationTime: Timestamp({ t: 1676014032, i: 1 })

}27017, 27018 은 여전히 셋의 멤버로서 남아있고, '(not reachable/healthy)' 로 통신하지 못하는 상태입니다.

멤버 수 변화가 없으니 majority도 변함이 없고,

새로운 프라이머리 선출 election, 합의를 위한 충분한 멤버가 확보되지 않아 failover도 이루어지지 않았습니다.

결과적으로 27019는 여전히 Secondary로 남아 있습니다. Primary가 없는 Replica set이 된 것입니다.

셋의 상태는 다음과 같습니다.

그렇다면 이 시점에서 로그는 어떻게 떨어졌을까요.

mongod.log 로그 파일을 확인하겠습니다.

mongod 로그 확인

마지막 홀로 남겨진 27019의 로그를 가장 먼저 확인해보겠습니다.

...

{"t":{"$date":"2023-02-11T19:18:02.260+09:00"},"s":"I", "c":"REPL_HB", "id":23974, "ctx":"ReplCoord-349","msg":"Heartbeat failed after max retries","attr":{"target":"localhost:27017","maxHeartbeatRetries":2,"error":{"code":6,"codeName":"HostUnreachable","errmsg":"Error connecting to localhost:27017 (127.0.0.1:27017) :: caused by :: Connection refused"}}}

{"t":{"$date":"2023-02-11T19:18:02.260+09:00"},"s":"I", "c":"REPL_HB", "id":23974, "ctx":"ReplCoord-353","msg":"Heartbeat failed after max retries","attr":{"target":"localhost:27018","maxHeartbeatRetries":2,"error":{"code":6,"codeName":"HostUnreachable","errmsg":"Error connecting to localhost:27018 (127.0.0.1:27018) :: caused by :: Connection refused"}}}

{"t":{"$date":"2023-02-11T19:18:02.421+09:00"},"s":"I", "c":"-", "id":4333222, "ctx":"ReplicaSetMonitor-TaskExecutor","msg":"RSM received error response","attr":{"host":"localhost:27017","error":"HostUnreachable: Error connecting to localhost:27017 (127.0.0.1:27017) :: caused by :: Connection refused","replicaSet":"judeMdbRs","response":"{}"}}

{"t":{"$date":"2023-02-11T19:18:02.421+09:00"},"s":"I", "c":"NETWORK", "id":4712102, "ctx":"ReplicaSetMonitor-TaskExecutor","msg":"Host failed in replica set","attr":{"replicaSet":"judeMdbRs","host":"localhost:27017","error":{"code":6,"codeName":"HostUnreachable","errmsg":"Error connecting to localhost:27017 (127.0.0.1:27017) :: caused by :: Connection refused"},"action":{"dropConnections":true,"requestImmediateCheck":true}}}

{"t":{"$date":"2023-02-11T19:18:02.422+09:00"},"s":"I", "c":"CONNPOOL", "id":22576, "ctx":"ReplicaSetMonitor-TaskExecutor","msg":"Connecting","attr":{"hostAndPort":"localhost:27018"}}

{"t":{"$date":"2023-02-11T19:18:02.422+09:00"},"s":"I", "c":"-", "id":4333222, "ctx":"ReplicaSetMonitor-TaskExecutor","msg":"RSM received error response","attr":{"host":"localhost:27018","error":"HostUnreachable: Error connecting to localhost:27018 (127.0.0.1:27018) :: caused by :: Connection refused","replicaSet":"judeMdbRs","response":"{}"}}

{"t":{"$date":"2023-02-11T19:18:02.422+09:00"},"s":"I", "c":"NETWORK", "id":4712102, "ctx":"ReplicaSetMonitor-TaskExecutor","msg":"Host failed in replica set","attr":{"replicaSet":"judeMdbRs","host":"localhost:27018","error":{"code":6,"codeName":"HostUnreachable","errmsg":"Error connecting to localhost:27018 (127.0.0.1:27018) :: caused by :: Connection refused"},"action":{"dropConnections":true,"requestImmediateCheck":true}}}하루 정도 불능한 상태로 셋과 mongod 인스턴스를 방치한 결과 로그는 수십만 줄 이상이 쌓였습니다. (…)

계속해서 셋의 멤버에게 health check를 하고 있고, connecting을 시도하고 있습니다. 그러나 닿을 수 없네요(unreachable)

{"t":{"$date":"2023-02-10T16:12:12.610+09:00"},"s":"I", "c":"STORAGE", "id":22430, "ctx":"Checkpointer","msg":"WiredTiger message","attr":{"message":"[1676013132:610170][77076:0x7f3cb9c7a700], WT_SESSION.checkpoint: [WT_VERB_CHECKPOINT_PROGRESS] saving checkpoint snapshot min: 2584, snapshot max: 2584 snapshot count: 0, oldest timestamp: (1676012832, 1) , meta checkpoint timestamp: (1676013132, 1) base write gen: 1"}}

{"t":{"$date":"2023-02-10T16:13:12.640+09:00"},"s":"I", "c":"STORAGE", "id":22430, "ctx":"Checkpointer","msg":"WiredTiger message","attr":{"message":"[1676013192:640272][77076:0x7f3cb9c7a700], WT_SESSION.checkpoint: [WT_VERB_CHECKPOINT_PROGRESS] saving checkpoint snapshot min: 2599, snapshot max: 2599 snapshot count: 0, oldest timestamp: (1676012892, 1) , meta checkpoint timestamp: (1676013192, 1) base write gen: 1"}}

{"t":{"$date":"2023-02-10T16:14:12.755+09:00"},"s":"I", "c":"STORAGE", "id":22430, "ctx":"Checkpointer","msg":"WiredTiger message","attr":{"message":"[1676013252:755361][77076:0x7f3cb9c7a700], WT_SESSION.checkpoint: [WT_VERB_CHECKPOINT_PROGRESS] saving checkpoint snapshot min: 2614, snapshot max: 2614 snapshot count: 0, oldest timestamp: (1676012952, 1) , meta checkpoint timestamp: (1676013252, 1) base write gen: 1"}}

{"t":{"$date":"2023-02-10T16:15:12.780+09:00"},"s":"I", "c":"STORAGE", "id":22430, "ctx":"Checkpointer","msg":"WiredTiger message","attr":{"message":"[1676013312:780652][77076:0x7f3cb9c7a700], WT_SESSION.checkpoint: [WT_VERB_CHECKPOINT_PROGRESS] saving checkpoint snapshot min: 2629, snapshot max: 2629 snapshot count: 0, oldest timestamp: (1676013012, 1) , meta checkpoint timestamp: (1676013312, 1) base write gen: 1"}}

{"t":{"$date":"2023-02-10T16:16:12.816+09:00"},"s":"I", "c":"STORAGE", "id":22430, "ctx":"Checkpointer","msg":"WiredTiger message","attr":{"message":"[1676013372:816697][77076:0x7f3cb9c7a700], WT_SESSION.checkpoint: [WT_VERB_CHECKPOINT_PROGRESS] saving checkpoint snapshot min: 2644, snapshot max: 2644 snapshot count: 0, oldest timestamp: (1676013072, 1) , meta checkpoint timestamp: (1676013372, 1) base write gen: 1"}}

{"t":{"$date":"2023-02-10T16:17:12.852+09:00"},"s":"I", "c":"STORAGE", "id":22430, "ctx":"Checkpointer","msg":"WiredTiger message","attr":{"message":"[1676013432:852777][77076:0x7f3cb9c7a700], WT_SESSION.checkpoint: [WT_VERB_CHECKPOINT_PROGRESS] saving checkpoint snapshot min: 2659, snapshot max: 2659 snapshot count: 0, oldest timestamp: (1676013132, 1) , meta checkpoint timestamp: (1676013432, 1) base write gen: 1"}}

{"t":{"$date":"2023-02-10T16:18:12.888+09:00"},"s":"I", "c":"STORAGE", "id":22430, "ctx":"Checkpointer","msg":"WiredTiger message","attr":{"message":"[1676013492:888546][77076:0x7f3cb9c7a700], WT_SESSION.checkpoint: [WT_VERB_CHECKPOINT_PROGRESS] saving checkpoint snapshot min: 2674, snapshot max: 2674 snapshot count: 0, oldest timestamp: (1676013192, 1) , meta checkpoint timestamp: (1676013492, 1) base write gen: 1"}}

{"t":{"$date":"2023-02-10T16:19:12.914+09:00"},"s":"I", "c":"STORAGE", "id":22430, "ctx":"Checkpointer","msg":"WiredTiger message","attr":{"message":"[1676013552:914870][77076:0x7f3cb9c7a700], WT_SESSION.checkpoint: [WT_VERB_CHECKPOINT_PROGRESS] saving checkpoint snapshot min: 2689, snapshot max: 2689 snapshot count: 0, oldest timestamp: (1676013252, 1) , meta checkpoint timestamp: (1676013552, 1) base write gen: 1"}}

{"t":{"$date":"2023-02-10T16:20:12.949+09:00"},"s":"I", "c":"STORAGE", "id":22430, "ctx":"Checkpointer","msg":"WiredTiger message","attr":{"message":"[1676013612:949123][77076:0x7f3cb9c7a700], WT_SESSION.checkpoint: [WT_VERB_CHECKPOINT_PROGRESS] saving checkpoint snapshot min: 2704, snapshot max: 2704 snapshot count: 0, oldest timestamp: (1676013312, 1) , meta checkpoint timestamp: (1676013612, 1) base write gen: 1"}}

{"t":{"$date":"2023-02-10T16:21:12.977+09:00"},"s":"I", "c":"STORAGE", "id":22430, "ctx":"Checkpointer","msg":"WiredTiger message","attr":{"message":"[1676013672:977802][77076:0x7f3cb9c7a700], WT_SESSION.checkpoint: [WT_VERB_CHECKPOINT_PROGRESS] saving checkpoint snapshot min: 2719, snapshot max: 2719 snapshot count: 0, oldest timestamp: (1676013372, 1) , meta checkpoint timestamp: (1676013672, 1) base write gen: 1"}}

{"t":{"$date":"2023-02-10T16:22:13.016+09:00"},"s":"I", "c":"STORAGE", "id":22430, "ctx":"Checkpointer","msg":"WiredTiger message","attr":{"message":"[1676013733:16410][77076:0x7f3cb9c7a700], WT_SESSION.checkpoint: [WT_VERB_CHECKPOINT_PROGRESS] saving checkpoint snapshot min: 2734, snapshot max: 2734 snapshot count: 0, oldest timestamp: (1676013432, 1) , meta checkpoint timestamp: (1676013732, 1) base write gen: 1"}}

{"t":{"$date":"2023-02-10T16:23:13.040+09:00"},"s":"I", "c":"STORAGE", "id":22430, "ctx":"Checkpointer","msg":"WiredTiger message","attr":{"message":"[1676013793:40852][77076:0x7f3cb9c7a700], WT_SESSION.checkpoint: [WT_VERB_CHECKPOINT_PROGRESS] saving checkpoint snapshot min: 2749, snapshot max: 2749 snapshot count: 0, oldest timestamp: (1676013492, 1) , meta checkpoint timestamp: (1676013792, 1) base write gen: 1"}}

{"t":{"$date":"2023-02-10T16:23:58.694+09:00"},"s":"I", "c":"REPL", "id":21343, "ctx":"RstlKillOpThread","msg":"Starting to kill user operations"}

{"t":{"$date":"2023-02-10T16:23:58.694+09:00"},"s":"I", "c":"REPL", "id":21344, "ctx":"RstlKillOpThread","msg":"Stopped killing user operations"}

{"t":{"$date":"2023-02-10T16:23:58.694+09:00"},"s":"I", "c":"REPL", "id":21340, "ctx":"RstlKillOpThread","msg":"State transition ops metrics","attr":{"metrics":{"lastStateTransition":"stepDown","userOpsKilled":0,"userOpsRunning":5}}}

{"t":{"$date":"2023-02-10T16:23:58.694+09:00"},"s":"I", "c":"COMMAND", "id":6015318, "ctx":"conn6","msg":"Yielding locks for prepared transactions."}

{"t":{"$date":"2023-02-10T16:23:58.694+09:00"},"s":"I", "c":"COMMAND", "id":6015319, "ctx":"conn6","msg":"Invalidating sessions for stepdown."}

{"t":{"$date":"2023-02-10T16:23:58.694+09:00"},"s":"I", "c":"REPL", "id":21358, "ctx":"conn6","msg":"Replica set state transition","attr":{"newState":"SECONDARY","oldState":"PRIMARY"}}

{"t":{"$date":"2023-02-10T16:23:58.696+09:00"},"s":"I", "c":"REPL", "id":5123007, "ctx":"conn6","msg":"Interrupting PrimaryOnlyService due to stepDown","attr":{"service":"TenantMigrationDonorService","numInstances":0,"numOperationContexts":0}}

{"t":{"$date":"2023-02-10T16:23:58.696+09:00"},"s":"I", "c":"REPL", "id":5123007, "ctx":"conn6","msg":"Interrupting PrimaryOnlyService due to stepDown","attr":{"service":"TenantMigrationRecipientService","numInstances":0,"numOperationContexts":0}}

{"t":{"$date":"2023-02-10T16:23:58.696+09:00"},"s":"I", "c":"REPL", "id":21347, "ctx":"conn6","msg":"Handing off election","attr":{"target":"localhost:27018"}}

{"t":{"$date":"2023-02-10T16:23:58.696+09:00"},"s":"I", "c":"COMMAND", "id":4695400, "ctx":"conn6","msg":"Terminating via shutdown command","attr":{"force":false,"timeoutSecs":15}}

{"t":{"$date":"2023-02-10T16:23:58.696+09:00"},"s":"I", "c":"REPL", "id":4794602, "ctx":"conn6","msg":"Attempting to enter quiesce mode"}

{"t":{"$date":"2023-02-10T16:23:58.696+09:00"},"s":"I", "c":"REPL", "id":4695102, "ctx":"conn6","msg":"Entering quiesce mode for shutdown","attr":{"quiesceTimeMillis":14997}}

{"t":{"$date":"2023-02-10T16:23:58.699+09:00"},"s":"I", "c":"NETWORK", "id":22944, "ctx":"conn5","msg":"Connection ended","attr":{"remote":"127.0.0.1:44788","uuid":"9f9f59dc-25e2-452b-a1e0-af1012fbd93a","connectionId":5,"connectionCount":18}}

{"t":{"$date":"2023-02-10T16:23:58.701+09:00"},"s":"I", "c":"REPL", "id":5972100, "ctx":"conn15","msg":"Voting yes in election"}

{"t":{"$date":"2023-02-10T16:23:58.701+09:00"},"s":"I", "c":"ELECTION", "id":23980, "ctx":"conn15","msg":"Responding to vote request","attr":{"request":"{ replSetRequestVotes: 1, setName: \"judeMdbRs\", dryRun: false, term: 2, candidateIndex: 1, configVersion: 1, configTerm: 1, lastAppliedOpTime: { ts: Timestamp(1676013832, 1), t: 1 } }","response":"{ term: 2, voteGranted: true, reason: \"\" }","replicaSetStatus":"Current replSetGetStatus output: { set: \"judeMdbRs\", date: new Date(1676013838701), myState: 2, term: 2, syncSourceHost: \"\", syncSourceId: -1, heartbeatIntervalMillis: 2000, majorityVoteCount: 2, writeMajorityCount: 2, votingMembersCount: 3, writableVotingMembersCount: 3, optimes: { lastCommittedOpTime: { ts: Timestamp(1676013832, 1), t: 1 }, lastCommittedWallTime: new Date(1676013832226), appliedOpTime: { ts: Timestamp(1676013832, 1), t: 1 }, durableOpTime: { ts: Timestamp(1676013832, 1), t: 1 }, lastAppliedWallTime: new Date(1676013832226), lastDurableWallTime: new Date(1676013832226) }, members: [ { _id: 0, name: \"localhost:27017\", health: 1.0, state: 2, stateStr: \"SECONDARY\", uptime: 0, optime: { ts: Timestamp(1676013832, 1), t: 1 }, optimeDate: new Date(1676013832000), lastAppliedWallTime: new Date(1676013832226), lastDurableWallTime: new Date(1676013832226), syncSourceHost: \"\", syncSourceId: -1, infoMessage: \"Could not find member to sync from\", configVersion: 1, configTerm: 1, self: true, lastHeartbeatMessage: \"\" }, { _id: 1, name: \"localhost:27018\", health: 1.0, state: 2, stateStr: \"SECONDARY\", uptime: 2327, optime: { ts: Timestamp(1676013832, 1), t: 1 }, optimeDurable: { ts: Timestamp(1676013832, 1), t: 1 }, optimeDate: new Date(1676013832000), optimeDurableDate: new Date(1676013832000), lastAppliedWallTime: new Date(1676013832226), lastDurableWallTime: new Date(1676013832226), lastHeartbeat: new Date(1676013838087), lastHeartbeatRecv: new Date(1676013837593), pingMs: 0, lastHeartbeatMessage: \"\", syncSourceHost: \"localhost:27017\", syncSourceId: 0, infoMessage: \"\", configVersion: 1, configTerm: 1 }, { _id: 2, name: \"localhost:27019\", health: 1.0, state: 2, stateStr: \"SECONDARY\", uptime: 2327, optime: { ts: Timestamp(1676013832, 1), t: 1 }, optimeDurable: { ts: Timestamp(1676013832, 1), t: 1 }, optimeDate: new Date(1676013832000), optimeDurableDate: new Date(1676013832000), lastAppliedWallTime: new Date(1676013832226), lastDurableWallTime: new Date(1676013832226), lastHeartbeat: new Date(1676013838088), lastHeartbeatRecv: new Date(1676013837595), pingMs: 0, lastHeartbeatMessage: \"\", syncSourceHost: \"localhost:27017\", syncSourceId: 0, infoMessage: \"\", configVersion: 1, configTerm: 1 } ] }"}}

{"t":{"$date":"2023-02-10T16:23:58.703+09:00"},"s":"I", "c":"REPL", "id":40447, "ctx":"TopologyVersionObserver","msg":"Stopped TopologyVersionObserver"}

{"t":{"$date":"2023-02-10T16:23:58.707+09:00"},"s":"I", "c":"REPL", "id":2903000, "ctx":"conn15","msg":"Restarting heartbeats after learning of a new primary","attr":{"myPrimaryId":"none","senderAndPrimaryId":1,"senderTerm":2}}

{"t":{"$date":"2023-02-10T16:23:58.708+09:00"},"s":"I", "c":"REPL", "id":21215, "ctx":"ReplCoord-11","msg":"Member is in new state","attr":{"hostAndPort":"localhost:27018","newState":"PRIMARY"}}

{"t":{"$date":"2023-02-10T16:23:58.711+09:00"},"s":"I", "c":"REPL", "id":21401, "ctx":"conn15","msg":"Scheduling heartbeat to fetch a newer config","attr":{"configTerm":2,"configVersion":1,"senderHost":"localhost:27018"}}

{"t":{"$date":"2023-02-10T16:23:58.712+09:00"},"s":"I", "c":"REPL", "id":6015317, "ctx":"ReplCoord-15","msg":"Setting new configuration state","attr":{"newState":"ConfigHBReconfiguring","oldState":"ConfigSteady"}}

{"t":{"$date":"2023-02-10T16:23:58.716+09:00"},"s":"I", "c":"REPL", "id":6015317, "ctx":"ReplCoord-15","msg":"Setting new configuration state","attr":{"newState":"ConfigSteady","oldState":"ConfigHBReconfiguring"}}

{"t":{"$date":"2023-02-10T16:23:58.716+09:00"},"s":"I", "c":"REPL", "id":21392, "ctx":"ReplCoord-15","msg":"New replica set config in use","attr":{"config":{"_id":"judeMdbRs","version":1,"term":2,"members":[{"_id":0,"host":"localhost:27017","arbiterOnly":false,"buildIndexes":true,"hidden":false,"priority":1,"tags":{},"secondaryDelaySecs":0,"votes":1},{"_id":1,"host":"l@@@

{"t":{"$date":"2023-02-10T16:23:58.716+09:00"},"s":"I", "c":"REPL", "id":21393, "ctx":"ReplCoord-15","msg":"Found self in config","attr":{"hostAndPort":"localhost:27017"}}

{"t":{"$date":"2023-02-10T16:23:59.698+09:00"},"s":"I", "c":"REPL", "id":21799, "ctx":"BackgroundSync","msg":"Sync source candidate chosen","attr":{"syncSource":"localhost:27018"}}

{"t":{"$date":"2023-02-10T16:23:59.698+09:00"},"s":"I", "c":"CONNPOOL", "id":22576, "ctx":"ReplCoordExternNetwork","msg":"Connecting","attr":{"hostAndPort":"localhost:27018"}}

{"t":{"$date":"2023-02-10T16:23:59.700+09:00"},"s":"I", "c":"REPL", "id":21088, "ctx":"BackgroundSync","msg":"Changed sync source","attr":{"oldSyncSource":"empty","newSyncSource":"localhost:27018"}}

{"t":{"$date":"2023-02-10T16:23:59.703+09:00"},"s":"W", "c":"NETWORK", "id":4615610, "ctx":"conn26","msg":"Failed to check socket connectivity","attr":{"error":{"code":6,"codeName":"HostUnreachable","errmsg":"Connection closed by peer"}}}

{"t":{"$date":"2023-02-10T16:23:59.703+09:00"},"s":"I", "c":"-", "id":20883, "ctx":"conn26","msg":"Interrupted operation as its client disconnected","attr":{"opId":85677}}

{"t":{"$date":"2023-02-10T16:23:59.703+09:00"},"s":"W", "c":"QUERY", "id":20478, "ctx":"conn26","msg":"getMore command executor error","attr":{"error":{"code":279,"codeName":"ClientDisconnect","errmsg":"operation was interrupted because a client disconnected"},"stats":{"stage":"COLLSCAN","nReturned":1246,"works":18906,"advanced":1246,"needTime":8830,"needYield":0,"saveState":8830,"restoreState":8829,"isEOF":0,"direction":"forward","minRecord":7198414627464544257,"docsExamined":1246},"cmd":{"getMore":1481583391641031458,"collection":"oplog.rs","batchSize":13981010,"maxTimeMS":5000,"term":1,"lastKnownCommittedOpTime":{"ts":{"$timestamp":{"t":1676011511,"i":1}},"t":-1}}}}

{"t":{"$date":"2023-02-10T16:23:59.703+09:00"},"s":"I", "c":"NETWORK", "id":22944, "ctx":"conn26","msg":"Connection ended","attr":{"remote":"127.0.0.1:55250","uuid":"650a23c4-a950-4aef-a78e-dd0d6f5a1c82","connectionId":26,"connectionCount":17}}

{"t":{"$date":"2023-02-10T16:24:08.703+09:00"},"s":"I", "c":"NETWORK", "id":22943, "ctx":"listener","msg":"Connection accepted","attr":{"remote":"127.0.0.1:39692","uuid":"9aa928fa-fca2-4758-9944-c1f5d9221410","connectionId":37,"connectionCount":18}}

{"t":{"$date":"2023-02-10T16:24:08.705+09:00"},"s":"I", "c":"NETWORK", "id":51800, "ctx":"conn37","msg":"client metadata","attr":{"remote":"127.0.0.1:39692","client":"conn37","doc":{"driver":{"name":"nodejs|mongosh","version":"4.13.0"},"os":{"type":"Linux","name":"linux","architecture":"x64","version":"5.4.0-132-generic"},"platform":"Node.js v16.19.0, LE (unified)","version":"4.13.0|1.6.2","application":{"name":"mongosh 1.6.2"}}}}

{"t":{"$date":"2023-02-10T16:24:08.707+09:00"},"s":"I", "c":"NETWORK", "id":22944, "ctx":"conn37","msg":"Connection ended","attr":{"remote":"127.0.0.1:39692","uuid":"9aa928fa-fca2-4758-9944-c1f5d9221410","connectionId":37,"connectionCount":17}}

{"t":{"$date":"2023-02-10T16:24:13.072+09:00"},"s":"I", "c":"STORAGE", "id":22430, "ctx":"Checkpointer","msg":"WiredTiger message","attr":{"message":"[1676013853:72660][77076:0x7f3cb9c7a700], WT_SESSION.checkpoint: [WT_VERB_CHECKPOINT_PROGRESS] saving checkpoint snapshot min: 2774, snapshot max: 2774 snapshot count: 0, oldest timestamp: (1676013548, 1) , meta checkpoint timestamp: (1676013848, 1) base write gen: 1"}}

{"t":{"$date":"2023-02-10T16:24:13.698+09:00"},"s":"I", "c":"REPL", "id":4695103, "ctx":"conn6","msg":"Exiting quiesce mode for shutdown"}

{"t":{"$date":"2023-02-10T16:24:13.698+09:00"},"s":"I", "c":"COMMAND", "id":4784901, "ctx":"conn6","msg":"Shutting down the MirrorMaestro"}

{"t":{"$date":"2023-02-10T16:24:13.698+09:00"},"s":"I", "c":"REPL", "id":40441, "ctx":"conn6","msg":"Stopping TopologyVersionObserver"}

{"t":{"$date":"2023-02-10T16:24:13.698+09:00"},"s":"I", "c":"SHARDING", "id":4784902, "ctx":"conn6","msg":"Shutting down the WaitForMajorityService"}

{"t":{"$date":"2023-02-10T16:24:13.698+09:00"},"s":"I", "c":"CONTROL", "id":4784903, "ctx":"conn6","msg":"Shutting down the LogicalSessionCache"}

{"t":{"$date":"2023-02-10T16:24:13.699+09:00"},"s":"I", "c":"NETWORK", "id":20562, "ctx":"conn6","msg":"Shutdown: going to close listening sockets"}

{"t":{"$date":"2023-02-10T16:24:13.701+09:00"},"s":"I", "c":"NETWORK", "id":23017, "ctx":"listener","msg":"removing socket file","attr":{"path":"/tmp/mongodb-27017.sock"}}

{"t":{"$date":"2023-02-10T16:24:13.701+09:00"},"s":"I", "c":"NETWORK", "id":4784905, "ctx":"conn6","msg":"Shutting down the global connection pool"}

{"t":{"$date":"2023-02-10T16:24:13.701+09:00"},"s":"I", "c":"CONTROL", "id":4784906, "ctx":"conn6","msg":"Shutting down the FlowControlTicketholder"}

{"t":{"$date":"2023-02-10T16:24:13.701+09:00"},"s":"I", "c":"-", "id":20520, "ctx":"conn6","msg":"Stopping further Flow Control ticket acquisitions."}

{"t":{"$date":"2023-02-10T16:24:13.701+09:00"},"s":"I", "c":"REPL", "id":4784907, "ctx":"conn6","msg":"Shutting down the replica set node executor"}

{"t":{"$date":"2023-02-10T16:24:13.701+09:00"},"s":"I", "c":"ASIO", "id":22582, "ctx":"ReplNodeDbWorkerNetwork","msg":"Killing all outstanding egress activity."}

{"t":{"$date":"2023-02-10T16:24:13.701+09:00"},"s":"I", "c":"CONTROL", "id":4784908, "ctx":"conn6","msg":"Shutting down the PeriodicThreadToAbortExpiredTransactions"}

{"t":{"$date":"2023-02-10T16:24:08.705+09:00"},"s":"I", "c":"NETWORK", "id":51800, "ctx":"conn37","msg":"client metadata","attr":{"remote":"127.0.0.1:39692","client":"conn37","doc":{"driver":{"name":"nodejs|mongosh","version":"4.13.0"},"os":{"type":"Linux","name":"linux","architecture":"x64","version":"5.4.0-132-generic"},"platform":"Node.js v16.19.0, LE (unified)","version":"4.13.0|1.6.2","application":{"name":"mongosh 1.6.2"}}}}

{"t":{"$date":"2023-02-10T16:24:08.707+09:00"},"s":"I", "c":"NETWORK", "id":22944, "ctx":"conn37","msg":"Connection ended","attr":{"remote":"127.0.0.1:39692","uuid":"9aa928fa-fca2-4758-9944-c1f5d9221410","connectionId":37,"connectionCount":17}}

{"t":{"$date":"2023-02-10T16:24:13.072+09:00"},"s":"I", "c":"STORAGE", "id":22430, "ctx":"Checkpointer","msg":"WiredTiger message","attr":{"message":"[1676013853:72660][77076:0x7f3cb9c7a700], WT_SESSION.checkpoint: [WT_VERB_CHECKPOINT_PROGRESS] saving checkpoint snapshot min: 2774, snapshot max: 2774 snapshot count: 0, oldest timestamp: (1676013548, 1) , meta checkpoint timestamp: (1676013848, 1) base write gen: 1"}}

{"t":{"$date":"2023-02-10T16:24:13.698+09:00"},"s":"I", "c":"REPL", "id":4695103, "ctx":"conn6","msg":"Exiting quiesce mode for shutdown"}

{"t":{"$date":"2023-02-10T16:24:13.698+09:00"},"s":"I", "c":"COMMAND", "id":4784901, "ctx":"conn6","msg":"Shutting down the MirrorMaestro"}

{"t":{"$date":"2023-02-10T16:24:13.698+09:00"},"s":"I", "c":"REPL", "id":40441, "ctx":"conn6","msg":"Stopping TopologyVersionObserver"}

{"t":{"$date":"2023-02-10T16:24:13.698+09:00"},"s":"I", "c":"SHARDING", "id":4784902, "ctx":"conn6","msg":"Shutting down the WaitForMajorityService"}

{"t":{"$date":"2023-02-10T16:24:13.698+09:00"},"s":"I", "c":"CONTROL", "id":4784903, "ctx":"conn6","msg":"Shutting down the LogicalSessionCache"}

{"t":{"$date":"2023-02-10T16:24:13.699+09:00"},"s":"I", "c":"NETWORK", "id":20562, "ctx":"conn6","msg":"Shutdown: going to close listening sockets"}

{"t":{"$date":"2023-02-10T16:24:13.701+09:00"},"s":"I", "c":"NETWORK", "id":23017, "ctx":"listener","msg":"removing socket file","attr":{"path":"/tmp/mongodb-27017.sock"}}

{"t":{"$date":"2023-02-10T16:24:13.701+09:00"},"s":"I", "c":"NETWORK", "id":4784905, "ctx":"conn6","msg":"Shutting down the global connection pool"}

{"t":{"$date":"2023-02-10T16:24:13.701+09:00"},"s":"I", "c":"CONTROL", "id":4784906, "ctx":"conn6","msg":"Shutting down the FlowControlTicketholder"}

{"t":{"$date":"2023-02-10T16:24:13.701+09:00"},"s":"I", "c":"-", "id":20520, "ctx":"conn6","msg":"Stopping further Flow Control ticket acquisitions."}

{"t":{"$date":"2023-02-10T16:24:13.701+09:00"},"s":"I", "c":"REPL", "id":4784907, "ctx":"conn6","msg":"Shutting down the replica set node executor"}

{"t":{"$date":"2023-02-10T16:24:13.701+09:00"},"s":"I", "c":"ASIO", "id":22582, "ctx":"ReplNodeDbWorkerNetwork","msg":"Killing all outstanding egress activity."}{"t":{"$date":"2023-02-10T16:24:08.705+09:00"},"s":"I", "c":"NETWORK", "id":51800, "ctx":"conn37","msg":"client metadata","attr":{"remote":"127.0.0.1:39692","client":"conn37","doc":{"driver":{"name":"nodejs|mongosh","version":"4.13.0"},"os":{"type":"Linux","name":"linux","architecture":"x64","version":"5.4.0-132-generic"},"platform":"Node.js v16.19.0, LE (unified)","version":"4.13.0|1.6.2","application":{"name":"mongosh 1.6.2"}}}}

{"t":{"$date":"2023-02-10T16:24:08.707+09:00"},"s":"I", "c":"NETWORK", "id":22944, "ctx":"conn37","msg":"Connection ended","attr":{"remote":"127.0.0.1:39692","uuid":"9aa928fa-fca2-4758-9944-c1f5d9221410","connectionId":37,"connectionCount":17}}

{"t":{"$date":"2023-02-10T16:24:13.072+09:00"},"s":"I", "c":"STORAGE", "id":22430, "ctx":"Checkpointer","msg":"WiredTiger message","attr":{"message":"[1676013853:72660][77076:0x7f3cb9c7a700], WT_SESSION.checkpoint: [WT_VERB_CHECKPOINT_PROGRESS] saving checkpoint snapshot min: 2774, snapshot max: 2774 snapshot count: 0, oldest timestamp: (1676013548, 1) , meta checkpoint timestamp: (1676013848, 1) base write gen: 1"}}

{"t":{"$date":"2023-02-10T16:24:13.698+09:00"},"s":"I", "c":"REPL", "id":4695103, "ctx":"conn6","msg":"Exiting quiesce mode for shutdown"}

{"t":{"$date":"2023-02-10T16:24:13.698+09:00"},"s":"I", "c":"COMMAND", "id":4784901, "ctx":"conn6","msg":"Shutting down the MirrorMaestro"}

{"t":{"$date":"2023-02-10T16:24:13.698+09:00"},"s":"I", "c":"REPL", "id":40441, "ctx":"conn6","msg":"Stopping TopologyVersionObserver"}

{"t":{"$date":"2023-02-10T16:24:13.698+09:00"},"s":"I", "c":"SHARDING", "id":4784902, "ctx":"conn6","msg":"Shutting down the WaitForMajorityService"}

{"t":{"$date":"2023-02-10T16:24:13.698+09:00"},"s":"I", "c":"CONTROL", "id":4784903, "ctx":"conn6","msg":"Shutting down the LogicalSessionCache"}

{"t":{"$date":"2023-02-10T16:24:13.699+09:00"},"s":"I", "c":"NETWORK", "id":20562, "ctx":"conn6","msg":"Shutdown: going to close listening sockets"}

{"t":{"$date":"2023-02-10T16:24:13.701+09:00"},"s":"I", "c":"NETWORK", "id":23017, "ctx":"listener","msg":"removing socket file","attr":{"path":"/tmp/mongodb-27017.sock"}}

{"t":{"$date":"2023-02-10T16:24:13.701+09:00"},"s":"I", "c":"NETWORK", "id":4784905, "ctx":"conn6","msg":"Shutting down the global connection pool"}

{"t":{"$date":"2023-02-10T16:24:13.701+09:00"},"s":"I", "c":"CONTROL", "id":4784906, "ctx":"conn6","msg":"Shutting down the FlowControlTicketholder"}

{"t":{"$date":"2023-02-10T16:24:13.701+09:00"},"s":"I", "c":"-", "id":20520, "ctx":"conn6","msg":"Stopping further Flow Control ticket acquisitions."}

{"t":{"$date":"2023-02-10T16:24:13.701+09:00"},"s":"I", "c":"REPL", "id":4784907, "ctx":"conn6","msg":"Shutting down the replica set node executor"}

{"t":{"$date":"2023-02-10T16:24:13.701+09:00"},"s":"I", "c":"ASIO", "id":22582, "ctx":"ReplNodeDbWorkerNetwork","msg":"Killing all outstanding egress activity."}

{"t":{"$date":"2023-02-10T16:24:13.701+09:00"},"s":"I", "c":"CONTROL", "id":4784908, "ctx":"conn6","msg":"Shutting down the PeriodicThreadToAbortExpiredTransactions"}

{"t":{"$date":"2023-02-10T16:24:13.701+09:00"},"s":"I", "c":"REPL", "id":4784909, "ctx":"conn6","msg":"Shutting down the ReplicationCoordinator"}

{"t":{"$date":"2023-02-10T16:24:13.701+09:00"},"s":"I", "c":"REPL", "id":5074000, "ctx":"conn6","msg":"Shutting down the replica set aware services."}

{"t":{"$date":"2023-02-10T16:24:13.701+09:00"},"s":"I", "c":"REPL", "id":5123006, "ctx":"conn6","msg":"Shutting down PrimaryOnlyService","attr":{"service":"TenantMigrationDonorService","numInstances":0,"numOperationContexts":0}}

{"t":{"$date":"2023-02-10T16:24:13.702+09:00"},"s":"I", "c":"ASIO", "id":22582, "ctx":"TenantMigrationDonorServiceNetwork","msg":"Killing all outstanding egress activity."}

{"t":{"$date":"2023-02-10T16:24:13.702+09:00"},"s":"I", "c":"REPL", "id":5123006, "ctx":"conn6","msg":"Shutting down PrimaryOnlyService","attr":{"service":"TenantMigrationRecipientService","numInstances":0,"numOperationContexts":0}}

{"t":{"$date":"2023-02-10T16:24:13.702+09:00"},"s":"I", "c":"ASIO", "id":22582, "ctx":"TenantMigrationRecipientServiceNetwork","msg":"Killing all outstanding egress activity."}

{"t":{"$date":"2023-02-10T16:24:13.702+09:00"},"s":"I", "c":"REPL", "id":21328, "ctx":"conn6","msg":"Shutting down replication subsystems"}

{"t":{"$date":"2023-02-10T16:24:13.702+09:00"},"s":"I", "c":"REPL", "id":21302, "ctx":"conn6","msg":"Stopping replication reporter thread"}

{"t":{"$date":"2023-02-10T16:24:13.702+09:00"},"s":"I", "c":"REPL", "id":21760, "ctx":"SyncSourceFeedback","msg":"SyncSourceFeedback error sending update","attr":{"syncTarget":"localhost:27018","error":{"code":90,"codeName":"CallbackCanceled","errmsg":"Reporter no longer valid"}}}

{"t":{"$date":"2023-02-10T16:24:13.702+09:00"},"s":"I", "c":"REPL", "id":21303, "ctx":"conn6","msg":"Stopping replication fetcher thread"}

{"t":{"$date":"2023-02-10T16:24:13.702+09:00"},"s":"I", "c":"REPL", "id":21304, "ctx":"conn6","msg":"Stopping replication applier thread"}

{"t":{"$date":"2023-02-10T16:24:13.703+09:00"},"s":"I", "c":"REPL", "id":21094, "ctx":"BackgroundSync","msg":"Replication producer stopped after oplog fetcher finished returning a batch from our sync source. Abandoning this batch of oplog entries and re-evaluating our sync source"}

{"t":{"$date":"2023-02-10T16:24:13.703+09:00"},"s":"I", "c":"REPL", "id":21107, "ctx":"BackgroundSync","msg":"Stopping replication producer"}

{"t":{"$date":"2023-02-10T16:24:13.715+09:00"},"s":"I", "c":"REPL", "id":21225, "ctx":"OplogApplier-0","msg":"Finished oplog application"}

{"t":{"$date":"2023-02-10T16:24:13.715+09:00"},"s":"I", "c":"REPL", "id":5698300, "ctx":"conn6","msg":"Stopping replication applier writer pool"}

{"t":{"$date":"2023-02-10T16:24:13.715+09:00"},"s":"I", "c":"REPL", "id":21307, "ctx":"conn6","msg":"Stopping replication storage threads"}

{"t":{"$date":"2023-02-10T16:24:13.715+09:00"},"s":"I", "c":"ASIO", "id":22582, "ctx":"OplogApplierNetwork","msg":"Killing all outstanding egress activity."}

{"t":{"$date":"2023-02-10T16:24:13.715+09:00"},"s":"I", "c":"ASIO", "id":22582, "ctx":"conn6","msg":"Killing all outstanding egress activity."}

{"t":{"$date":"2023-02-10T16:24:13.716+09:00"},"s":"I", "c":"ASIO", "id":22582, "ctx":"ReplCoordExternNetwork","msg":"Killing all outstanding egress activity."}

{"t":{"$date":"2023-02-10T16:24:13.716+09:00"},"s":"I", "c":"CONNPOOL", "id":22572, "ctx":"ReplCoordExternNetwork","msg":"Dropping all pooled connections","attr":{"hostAndPort":"localhost:27018","error":"ShutdownInProgress: Shutting down the connection pool"}}

{"t":{"$date":"2023-02-10T16:24:13.717+09:00"},"s":"I", "c":"ASIO", "id":22582, "ctx":"ReplNetwork","msg":"Killing all outstanding egress activity."}

{"t":{"$date":"2023-02-10T16:24:13.717+09:00"},"s":"I", "c":"CONNPOOL", "id":22572, "ctx":"ReplNetwork","msg":"Dropping all pooled connections","attr":{"hostAndPort":"localhost:27019","error":"ShutdownInProgress: Shutting down the connection pool"}}

{"t":{"$date":"2023-02-10T16:24:13.717+09:00"},"s":"I", "c":"SHARDING", "id":4784910, "ctx":"conn6","msg":"Shutting down the ShardingInitializationMongoD"}

{"t":{"$date":"2023-02-10T16:24:13.717+09:00"},"s":"I", "c":"REPL", "id":4784911, "ctx":"conn6","msg":"Enqueuing the ReplicationStateTransitionLock for shutdown"}

{"t":{"$date":"2023-02-10T16:24:13.717+09:00"},"s":"I", "c":"-", "id":4784912, "ctx":"conn6","msg":"Killing all operations for shutdown"}

{"t":{"$date":"2023-02-10T16:24:13.717+09:00"},"s":"I", "c":"-", "id":4695300, "ctx":"conn6","msg":"Interrupted all currently running operations","attr":{"opsKilled":6}}

{"t":{"$date":"2023-02-10T16:24:13.717+09:00"},"s":"I", "c":"TENANT_M", "id":5093807, "ctx":"conn6","msg":"Shutting down all TenantMigrationAccessBlockers on global shutdown"}

{"t":{"$date":"2023-02-10T16:24:13.717+09:00"},"s":"I", "c":"COMMAND", "id":4784913, "ctx":"conn6","msg":"Shutting down all open transactions"}

{"t":{"$date":"2023-02-10T16:24:13.717+09:00"},"s":"I", "c":"REPL", "id":4784914, "ctx":"conn6","msg":"Acquiring the ReplicationStateTransitionLock for shutdown"}

{"t":{"$date":"2023-02-10T16:24:13.717+09:00"},"s":"I", "c":"INDEX", "id":4784915, "ctx":"conn6","msg":"Shutting down the IndexBuildsCoordinator"}

{"t":{"$date":"2023-02-10T16:24:13.718+09:00"},"s":"I", "c":"REPL", "id":4784916, "ctx":"conn6","msg":"Reacquiring the ReplicationStateTransitionLock for shutdown"}

{"t":{"$date":"2023-02-10T16:24:13.718+09:00"},"s":"I", "c":"REPL", "id":4784917, "ctx":"conn6","msg":"Attempting to mark clean shutdown"}

{"t":{"$date":"2023-02-10T16:24:13.718+09:00"},"s":"I", "c":"NETWORK", "id":4784918, "ctx":"conn6","msg":"Shutting down the ReplicaSetMonitor"}

{"t":{"$date":"2023-02-10T16:24:13.718+09:00"},"s":"I", "c":"REPL", "id":4784920, "ctx":"conn6","msg":"Shutting down the LogicalTimeValidator"}

{"t":{"$date":"2023-02-10T16:24:13.718+09:00"},"s":"I", "c":"SHARDING", "id":4784921, "ctx":"conn6","msg":"Shutting down the MigrationUtilExecutor"}

{"t":{"$date":"2023-02-10T16:24:13.718+09:00"},"s":"I", "c":"ASIO", "id":22582, "ctx":"MigrationUtil-TaskExecutor","msg":"Killing all outstanding egress activity."}

{"t":{"$date":"2023-02-10T16:24:13.718+09:00"},"s":"I", "c":"COMMAND", "id":4784923, "ctx":"conn6","msg":"Shutting down the ServiceEntryPoint"}

{"t":{"$date":"2023-02-10T16:24:13.718+09:00"},"s":"I", "c":"CONTROL", "id":4784925, "ctx":"conn6","msg":"Shutting down free monitoring"}

{"t":{"$date":"2023-02-10T16:24:13.718+09:00"},"s":"I", "c":"CONTROL", "id":20609, "ctx":"conn6","msg":"Shutting down free monitoring"}

{"t":{"$date":"2023-02-10T16:24:13.718+09:00"},"s":"I", "c":"CONTROL", "id":4784927, "ctx":"conn6","msg":"Shutting down the HealthLog"}

{"t":{"$date":"2023-02-10T16:24:13.718+09:00"},"s":"I", "c":"CONTROL", "id":4784928, "ctx":"conn6","msg":"Shutting down the TTL monitor"}

{"t":{"$date":"2023-02-10T16:24:13.718+09:00"},"s":"I", "c":"INDEX", "id":3684100, "ctx":"conn6","msg":"Shutting down TTL collection monitor thread"}

{"t":{"$date":"2023-02-10T16:24:13.720+09:00"},"s":"W", "c":"QUERY", "id":20478, "ctx":"conn25","msg":"getMore command executor error","attr":{"error":{"code":11600,"codeName":"InterruptedAtShutdown","errmsg":"interrupted at shutdown"},"stats":{"stage":"COLLSCAN","nReturned":1248,"works":19280,"advanced":1248,"needTime":9016,"needYield":0,"saveState":9016,"restoreState":9015,"isEOF":0,"direction":"forward","minRecord":7198414627464544257,"docsExamined":1248},"cmd":{"getMore":8010053507779052125,"collection":"oplog.rs","batchSize":13981010,"maxTimeMS":5000,"term":1,"lastKnownCommittedOpTime":{"ts":{"$timestamp":{"t":1676011511,"i":1}},"t":-1}}}}

{"t":{"$date":"2023-02-10T16:24:13.721+09:00"},"s":"I", "c":"INDEX", "id":3684101, "ctx":"conn6","msg":"Finished shutting down TTL collection monitor thread"}

{"t":{"$date":"2023-02-10T16:24:13.721+09:00"},"s":"I", "c":"CONTROL", "id":4784929, "ctx":"conn6","msg":"Acquiring the global lock for shutdown"}

{"t":{"$date":"2023-02-10T16:24:13.721+09:00"},"s":"I", "c":"CONTROL", "id":4784930, "ctx":"conn6","msg":"Shutting down the storage engine"}

{"t":{"$date":"2023-02-10T16:24:13.721+09:00"},"s":"I", "c":"STORAGE", "id":22320, "ctx":"conn6","msg":"Shutting down journal flusher thread"}

{"t":{"$date":"2023-02-10T16:24:13.721+09:00"},"s":"I", "c":"STORAGE", "id":22321, "ctx":"conn6","msg":"Finished shutting down journal flusher thread"}

{"t":{"$date":"2023-02-10T16:24:13.721+09:00"},"s":"I", "c":"STORAGE", "id":22322, "ctx":"conn6","msg":"Shutting down checkpoint thread"}

{"t":{"$date":"2023-02-10T16:24:13.722+09:00"},"s":"I", "c":"STORAGE", "id":22323, "ctx":"conn6","msg":"Finished shutting down checkpoint thread"}

{"t":{"$date":"2023-02-10T16:24:13.722+09:00"},"s":"I", "c":"STORAGE", "id":20282, "ctx":"conn6","msg":"Deregistering all the collections"}

{"t":{"$date":"2023-02-10T16:24:13.722+09:00"},"s":"I", "c":"STORAGE", "id":22372, "ctx":"OplogVisibilityThread","msg":"Oplog visibility thread shutting down."}

{"t":{"$date":"2023-02-10T16:24:13.722+09:00"},"s":"I", "c":"STORAGE", "id":22261, "ctx":"conn6","msg":"Timestamp monitor shutting down"}

{"t":{"$date":"2023-02-10T16:24:13.723+09:00"},"s":"I", "c":"STORAGE", "id":22317, "ctx":"conn6","msg":"WiredTigerKVEngine shutting down"}

{"t":{"$date":"2023-02-10T16:24:13.723+09:00"},"s":"I", "c":"STORAGE", "id":22318, "ctx":"conn6","msg":"Shutting down session sweeper thread"}

{"t":{"$date":"2023-02-10T16:24:13.723+09:00"},"s":"I", "c":"STORAGE", "id":22319, "ctx":"conn6","msg":"Finished shutting down session sweeper thread"}

{"t":{"$date":"2023-02-10T16:24:13.725+09:00"},"s":"I", "c":"STORAGE", "id":4795902, "ctx":"conn6","msg":"Closing WiredTiger","attr":{"closeConfig":"leak_memory=true,"}}

{"t":{"$date":"2023-02-10T16:24:13.727+09:00"},"s":"I", "c":"STORAGE", "id":22430, "ctx":"conn6","msg":"WiredTiger message","attr":{"message":"[1676013853:727703][77076:0x7f3caa259700], close_ckpt: [WT_VERB_CHECKPOINT_PROGRESS] saving checkpoint snapshot min: 2778, snapshot max: 2778 snapshot count: 0, oldest timestamp: (1676013548, 1) , meta checkpoint timestamp: (1676013848, 1) base write gen: 1"}}

{"t":{"$date":"2023-02-10T16:24:13.764+09:00"},"s":"I", "c":"STORAGE", "id":4795901, "ctx":"conn6","msg":"WiredTiger closed","attr":{"durationMillis":39}}

{"t":{"$date":"2023-02-10T16:24:13.764+09:00"},"s":"I", "c":"STORAGE", "id":22279, "ctx":"conn6","msg":"shutdown: removing fs lock..."}

{"t":{"$date":"2023-02-10T16:24:13.764+09:00"},"s":"I", "c":"-", "id":4784931, "ctx":"conn6","msg":"Dropping the scope cache for shutdown"}

{"t":{"$date":"2023-02-10T16:24:13.764+09:00"},"s":"I", "c":"FTDC", "id":4784926, "ctx":"conn6","msg":"Shutting down full-time data capture"}

{"t":{"$date":"2023-02-10T16:24:13.764+09:00"},"s":"I", "c":"FTDC", "id":20626, "ctx":"conn6","msg":"Shutting down full-time diagnostic data capture"}

{"t":{"$date":"2023-02-10T16:24:13.770+09:00"},"s":"I", "c":"CONTROL", "id":20565, "ctx":"conn6","msg":"Now exiting"}

{"t":{"$date":"2023-02-10T16:24:13.770+09:00"},"s":"I", "c":"CONTROL", "id":23138, "ctx":"conn6","msg":"Shutting down","attr":{"exitCode":0}}최초의 Primary 였던 27017의 journal 로그입니다.

shutdown 을 위해 커넥션을 하나씩 종료한 뒤, 최종적으로 shutdown을 하여 프로세스를 종료한 것을 확인할 수 있습니다.

결론

Replica set의 멤버를 하나씩 shutdown 하여 majority 미만의 멤버가 셋에 남게 되는 상황의 시뮬레이션을 진행했습니다.

레플리카 셋의 election과 합의를 통해 동작하는 기능이 정상적으로 작동하지 않았고, 결론적으로 Primary가 없는 레플리카 셋으로 남아 불능이 된 것을 확인할 수 있었습니다.

'mongoDB' 카테고리의 다른 글

| MongoDB 영속성과 read/write concern (0) | 2023.07.09 |

|---|